Last updated: Sat, Aug 17, 2024

In an earlier section (Selection Bias) I referred to a study, “Changes after Multidisciplinary Pain Treatment....” The researchers administered a large set of questionnaires to their subjects immediately following and again one year after they had completed a three-week, semi-residential treatment program for pain. The questionnaires asked about the subjects' level of pain, their level of functioning, their beliefs about their pain, and their strategies for coping with their pain.

A year after the treatment program, subjects' pain and functioning had both changed. For some subjects, pain had increased; for others, it had decreased. For some, functioning had improved; for others, it had become worse. The subjects' pain beliefs had changed, and so had the strategies they used for coping with pain.

The researchers were interested in the relationship between measures of well-being (pain and functioning) and measures of beliefs and coping strategies. Was there a consistent relationship between changes in functioning and certain beliefs about pain? Was there a consistent relationship between changes in functioning and changes in the coping strategies that were used?

The researchers were interested in these things because “identification of specific beliefs and coping responses that are most closely linked to change in functioning during this critical period could be useful to clinicians when deciding which beliefs and coping responses to target during treatment and during follow-up sessions....”1 In other words, if they could identify beliefs that lead to poor outcomes, they could try to change these beliefs. If they could identify coping strategies that lead to good outcomes, they could encourage patients to use those strategies.

The phrase “most closely linked” is important here. It acknowledges that a study like this can identify links among factors (well-being and beliefs, for example), but can't establish the nature of the links. Such links are often referred to as associations. The claim that belief A caused outcome X can't be proven based on the evidence collected. The evidence can't distinguish whether outcome X caused belief A or whether belief A caused outcome X. The authors acknowledge this in their discussion:

> Although correlational analyses cannot be used to prove causality, they can distinguish variables that are more versus less likely to have a causal impact on functioning; consistently large and significant associations indicate that causal relationships remain possible, whereas consistently weak associations suggest the lack of causal relationships.

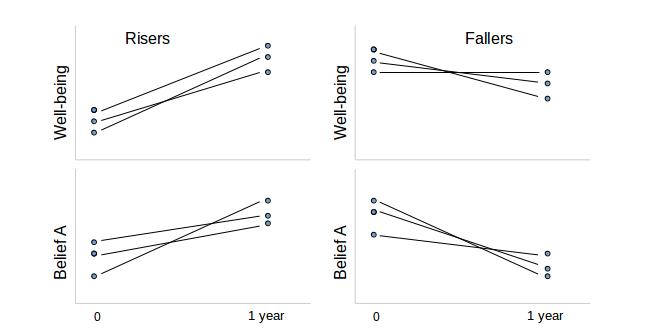

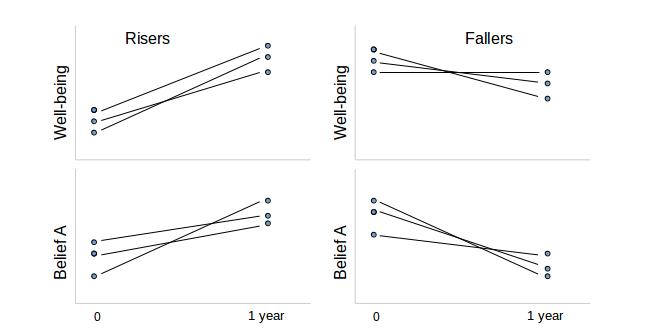

Although Figure 1 (“Some correlations”) isn't based on data from the study, it illustrates the idea of correlation as it applies to this study. The upper left-hand graph shows a group whose well-being scores were higher a year after the treatment program. The lower left-hand graph shows the belief A scores for this same group. (Belief A might be something like, “I'm responsible for my own pain.”) Those scores also went up. The upper right-hand graph shows a group whose well-being scores were lower a year after the program. Underneath it, the belief A scores for that group also went down. When well-being went up, belief A went up, and when well-being went down, belief A went down. (You would say that “the change in well-being is correlated with the change in belief A.”) That's the meaning of correlation.

Based on this fictitious example, it is possible that belief A causes improved well-being. However, it's equally possible that improved well-being causes belief A.
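The symmetry described above can be checked with a few lines of arithmetic. The following is only a minimal sketch: the eight subjects and their change scores are invented for illustration (they are not data from the study), and the helper name `pearson_r` is my own. It computes the standard Pearson correlation coefficient twice, once in each direction.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Invented one-year changes for eight fictitious subjects (NOT study data).
# Positive numbers mean the score went up; negative means it went down.
wellbeing_change = [3, 2, 4, 1, -2, -3, -1, -4]
belief_a_change = [2, 3, 3, 1, -1, -3, -2, -3]

# Compute the correlation in both "directions".
r_forward = pearson_r(wellbeing_change, belief_a_change)
r_reverse = pearson_r(belief_a_change, wellbeing_change)

print(f"r(well-being, belief A) = {r_forward:.2f}")
print(f"r(belief A, well-being) = {r_reverse:.2f}")
```

Both lines print the same value. The calculation treats the two variables identically, so the number it produces carries no information about which one, if either, is driving the other.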

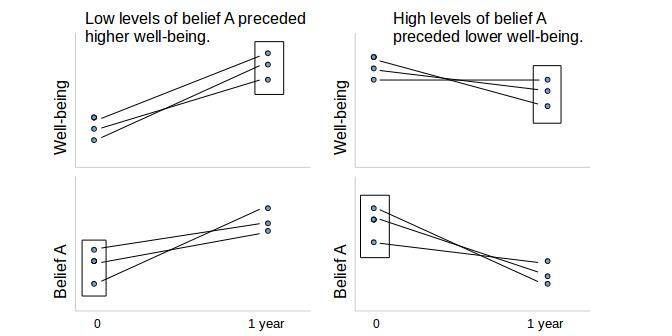

Could we prove that belief A causes improved well-being by looking at the data a different way? Suppose that we look at the group of people with a low level of belief A right after the therapy (the left-hand column) and compare them to the group with a high level of belief A right after the therapy (the right-hand column). How did these two groups fare a year later? (Figure 2 makes this comparison. It uses the same fictitious subjects as Figure 1 does.) It looks as if we may have proven that a high level of belief A leads to lower well-being a year later. This is, however, a deception.

To understand why, look at Figure 2. The pattern it shows can be explained simply by supposing that high well-being and belief A go together for some reason other than one causing the other. To prove causality, it is necessary either to prove the mechanism by which one drives the other, or to establish that other theories of causality aren't tenable. (While you're looking at Figure 2, “Correlation doesn't imply causality,” reflect on how my suggestions about the meaning of the correlations affect your perception of the figure.)
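One such "other reason" is a shared cause. The sketch below is a simulation under assumptions of my own, not anything taken from the study: a hidden factor (hypothetical, and deliberately unnamed) feeds into both belief A and well-being, while neither of those two has any influence on the other. The noise levels and the sample size are arbitrary choices.

```python
import random
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


rng = random.Random(0)  # fixed seed so the run is repeatable
n_subjects = 2000       # arbitrary size for the simulated group

# Hypothetical hidden factor that the questionnaires never measured.
hidden = [rng.gauss(0, 1) for _ in range(n_subjects)]

# Each score is the hidden factor plus its own independent noise.
# Belief A is never used to compute well-being, and vice versa.
belief_a = [h + rng.gauss(0, 0.5) for h in hidden]
wellbeing = [h + rng.gauss(0, 0.5) for h in hidden]

r = pearson_r(belief_a, wellbeing)
print(f"correlation between belief A and well-being: {r:.2f}")
```

The printed correlation is strong even though, by construction, changing a simulated subject's belief A would do nothing to that subject's well-being. A clinician looking only at the two measured scores could not tell this situation apart from one in which belief A really does drive well-being.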

The study authors profess that they don't purport to establish causality, so why am I making a production of this point? The quote from their discussion that I included above seems to me to belie their claim. Certainly there is no reason for a clinician to use these results as the authors have suggested unless beliefs and strategies cause changes in pain and functioning. If the converse is true, and changes in pain and functioning cause changes in patients' beliefs and strategies, I can't see what the value of this study would be.

This might raise the same question in your mind as it does in mine: why didn't they test causality?

Part of the reason may be that it is much more expensive to prove causation than correlation. Another part might be that it's so easy to slip from “correlation,” “linkage,” or “association” into the assumption of causality. The authors seem to assume that readers will assume causation, for whatever reason. This pattern is very common in behavioral pain research.